Physically Based Renderer

Built as part of the computer graphics curriculum at Charles University, using the Nori educational ray tracing framework. The project progresses from fundamental Monte Carlo sampling to a full path tracer with multiple importance sampling.

What was implemented

Sampling foundations — Inverse CDF derivations and implementations for tent, uniform disk, uniform sphere, uniform hemisphere, cosine-weighted hemisphere, and Beckmann distribution sampling. Each validated with chi-squared tests.
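The inverse-CDF warps listed above could look roughly like the following. This is a minimal Python sketch, not the project's code (Nori itself is C++). It assumes each warp takes uniform samples in [0, 1) and returns a point in the target domain. All function names are hypothetical and are not Nori's API. The Beckmann warp samples half-vectors proportionally to D(h) cos θ_h, which is the usual choice for microfacet sampling.

```python
import math

# Hypothetical Python ports of inverse-CDF warps. Each maps uniform samples
# u in [0, 1) to the target domain by inverting its (marginal) CDF.

def square_to_tent_1d(u):
    """Inverse CDF of the tent density p(t) = 1 - |t| on [-1, 1]."""
    if u < 0.5:
        return math.sqrt(2.0 * u) - 1.0        # CDF = (t + 1)^2 / 2 on [-1, 0]
    return 1.0 - math.sqrt(2.0 * (1.0 - u))    # CDF = 1 - (1 - t)^2 / 2 on [0, 1]

def square_to_tent(u1, u2):
    """Separable 2D tent on [-1, 1]^2."""
    return square_to_tent_1d(u1), square_to_tent_1d(u2)

def tent_pdf(x, y):
    if abs(x) > 1.0 or abs(y) > 1.0:
        return 0.0
    return (1.0 - abs(x)) * (1.0 - abs(y))

def square_to_uniform_disk(u1, u2):
    r = math.sqrt(u1)                  # area-uniform: CDF of r is r^2; pdf = 1 / pi
    phi = 2.0 * math.pi * u2
    return r * math.cos(phi), r * math.sin(phi)

def square_to_uniform_sphere(u1, u2):
    z = 1.0 - 2.0 * u1                 # z is uniform on [-1, 1]; pdf = 1 / (4 pi)
    r = math.sqrt(max(0.0, 1.0 - z * z))
    phi = 2.0 * math.pi * u2
    return r * math.cos(phi), r * math.sin(phi), z

def square_to_uniform_hemisphere(u1, u2):
    z = u1                             # cos(theta) uniform on [0, 1]; pdf = 1 / (2 pi)
    r = math.sqrt(max(0.0, 1.0 - z * z))
    phi = 2.0 * math.pi * u2
    return r * math.cos(phi), r * math.sin(phi), z

def square_to_cosine_hemisphere(u1, u2):
    # Marginal CDF of theta is sin^2(theta), so sin(theta) = sqrt(u1).
    sin_t, cos_t = math.sqrt(u1), math.sqrt(max(0.0, 1.0 - u1))
    phi = 2.0 * math.pi * u2
    return sin_t * math.cos(phi), sin_t * math.sin(phi), cos_t

def cosine_hemisphere_pdf(w):
    return w[2] / math.pi if w[2] > 0.0 else 0.0

def square_to_beckmann(u1, u2, alpha):
    # Marginal CDF of theta is 1 - exp(-tan^2(theta) / alpha^2); invert it.
    tan2 = -alpha * alpha * math.log(1.0 - u1)
    cos_t = 1.0 / math.sqrt(1.0 + tan2)
    sin_t = math.sqrt(max(0.0, 1.0 - cos_t * cos_t))
    phi = 2.0 * math.pi * u2
    return sin_t * math.cos(phi), sin_t * math.sin(phi), cos_t

def beckmann_pdf(w, alpha):
    """Density of square_to_beckmann: D(w) * cos(theta) per unit solid angle."""
    cos_t = w[2]
    if cos_t <= 0.0:
        return 0.0
    tan2 = (1.0 - cos_t * cos_t) / (cos_t * cos_t)
    return math.exp(-tan2 / (alpha * alpha)) / (math.pi * alpha * alpha * cos_t ** 3)
```

A chi-squared test then bins many warped samples and compares the observed histogram with the pdf integrated over each bin. A large test statistic flags a mismatch between a warp and its pdf.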

Materials — Diffuse (Lambertian), perfect mirror, dielectric (glass with Fresnel reflection/refraction), and Beckmann microfacet BRDF.
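For the dielectric, a common approach uses the exact unpolarized Fresnel reflectance F to choose between mirror reflection (with probability F) and Snell refraction. The following is a minimal Python sketch under that assumption. It works in a local shading frame with the normal along +z and `wi` pointing away from the surface. The function names are hypothetical and are not taken from the project or Nori.

```python
import math

def fresnel_dielectric(cos_theta_i, eta_ext, eta_int):
    """Unpolarized Fresnel reflectance at a smooth dielectric interface.
    A negative cos_theta_i means the ray arrives from the interior side."""
    if cos_theta_i < 0.0:                  # inside the object: swap the media
        eta_ext, eta_int = eta_int, eta_ext
        cos_theta_i = -cos_theta_i
    eta = eta_ext / eta_int
    sin2_t = eta * eta * max(0.0, 1.0 - cos_theta_i * cos_theta_i)  # Snell's law
    if sin2_t >= 1.0:
        return 1.0                         # total internal reflection
    cos_theta_t = math.sqrt(1.0 - sin2_t)
    r_s = (eta_ext * cos_theta_i - eta_int * cos_theta_t) / (eta_ext * cos_theta_i + eta_int * cos_theta_t)
    r_p = (eta_int * cos_theta_i - eta_ext * cos_theta_t) / (eta_int * cos_theta_i + eta_ext * cos_theta_t)
    return 0.5 * (r_s * r_s + r_p * r_p)

def sample_dielectric(wi, eta_ext, eta_int, u):
    """Reflect with probability F, otherwise refract. Returns (wo, reflected)."""
    x, y, z = wi
    if u < fresnel_dielectric(z, eta_ext, eta_int):
        return (-x, -y, z), True           # mirror reflection about the normal
    eta_i, eta_t = (eta_ext, eta_int) if z > 0.0 else (eta_int, eta_ext)
    eta = eta_i / eta_t
    sin2_t = eta * eta * max(0.0, 1.0 - z * z)
    cos_t = math.sqrt(max(0.0, 1.0 - sin2_t))
    # Tangential part scales by eta; the ray crosses to the other side of the surface.
    return (-eta * x, -eta * y, -math.copysign(cos_t, z)), False
```

At normal incidence from air into glass (indices 1.0 and 1.5), `fresnel_dielectric` gives ((1 - 1.5) / 2.5)^2 = 0.04. Sampling proportionally to F means the F and pdf factors cancel, so the sampled weight reduces to 1 for both branches.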

Integrators — Built incrementally:

- Ambient occlusion

- Whitted-style ray tracing with recursive specular bounces and Russian roulette

- Path tracer with BSDF sampling only

- Path tracer with next event estimation (explicit light sampling)

- Path tracer with multiple importance sampling using the balance heuristic, combining BSDF and light sampling, with both PDFs converted to a common measure (solid angle vs. surface area) before weighting
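The measure conversion and balance-heuristic weighting from the last integrator can be sketched as follows. This is a minimal Python illustration under stated assumptions: light samples come with a density per unit area, and BSDF samples come with a density per unit solid angle. All function names are hypothetical. `mis_integrate` is a toy 1D check of the combination rule, not renderer code.

```python
import math
import random

def area_to_solid_angle_pdf(pdf_area, dist, cos_light):
    """Convert a density per unit area on the emitter into a density per unit
    solid angle at the shading point: p_w = p_A * d^2 / cos(theta_light).
    cos_light is the cosine between the emitter normal and the direction back
    to the shading point."""
    if cos_light <= 0.0:
        return 0.0                      # emitter faces away from the shading point
    return pdf_area * dist * dist / cos_light

def balance_heuristic(pdf_this, pdf_other):
    """Weight for a sample drawn from 'this' strategy when each strategy
    takes one sample. Both pdfs must be expressed in the same measure."""
    total = pdf_this + pdf_other
    return pdf_this / total if total > 0.0 else 0.0

def light_sample_mis_weight(pdf_light_area, dist, cos_light, pdf_bsdf_solid_angle):
    """MIS weight for a next-event-estimation sample: convert the light pdf to
    solid angle first, then compare it with the BSDF pdf of the same direction."""
    pdf_light = area_to_solid_angle_pdf(pdf_light_area, dist, cos_light)
    return balance_heuristic(pdf_light, pdf_bsdf_solid_angle)

def _pdf_uniform(x):
    return 1.0

def _pdf_linear(x):
    return 2.0 * x

def mis_integrate(f, n, seed=0):
    """Toy estimate of the integral of f over [0, 1], combining a uniform
    strategy with a linear one (p(x) = 2x) via the balance heuristic."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n):
        x1 = rng.random()               # strategy 1: uniform
        x2 = math.sqrt(rng.random())    # strategy 2: inverse CDF of 2x
        if _pdf_uniform(x1) > 0.0:
            total += balance_heuristic(_pdf_uniform(x1), _pdf_linear(x1)) * f(x1) / _pdf_uniform(x1)
        if _pdf_linear(x2) > 0.0:
            total += balance_heuristic(_pdf_linear(x2), _pdf_uniform(x2)) * f(x2) / _pdf_linear(x2)
    return total / n
```

Because the two weights sum to 1 wherever either pdf is nonzero, the combined estimator stays unbiased. Each strategy dominates where its own pdf is large. Mixing an area-measure pdf with a solid-angle pdf in `balance_heuristic` would silently produce wrong weights, which is why the conversion comes first.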

Area lights — Emitter sampling using a discrete PDF over triangles weighted by surface area, with uniform barycentric coordinate sampling.
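Area-weighted triangle selection followed by uniform barycentric sampling can be sketched like this. This is a minimal Python illustration that assumes a mesh emitter given as a list of vertex triples; `AreaLightSampler` and its methods are hypothetical names. It uses the common square-to-triangle mapping (1 - √u₁, u₂√u₁) for the barycentrics.

```python
import bisect
import math

def _sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def _cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def triangle_area(p0, p1, p2):
    c = _cross(_sub(p1, p0), _sub(p2, p0))
    return 0.5 * math.sqrt(c[0] ** 2 + c[1] ** 2 + c[2] ** 2)

class AreaLightSampler:
    """Hypothetical helper: picks triangle i with probability A_i / A_total,
    then a uniform point on it (density 1 / A_i), so the combined density is
    1 / A_total per unit area."""

    def __init__(self, triangles):
        self.triangles = list(triangles)
        running, cdf = 0.0, []
        for tri in self.triangles:
            running += triangle_area(*tri)
            cdf.append(running)
        self.total_area = running
        self.cdf = [c / running for c in cdf]   # normalized discrete CDF, last entry 1.0

    def sample(self, u_select, u1, u2):
        """Returns (point, pdf with respect to surface area)."""
        idx = min(bisect.bisect_right(self.cdf, u_select), len(self.cdf) - 1)
        p0, p1, p2 = self.triangles[idx]
        s = math.sqrt(u1)
        b0, b1 = 1.0 - s, u2 * s                # uniform barycentric coordinates
        b2 = 1.0 - b0 - b1
        point = tuple(b0 * a + b1 * b + b2 * c for a, b, c in zip(p0, p1, p2))
        return point, 1.0 / self.total_area
```

The area pdf returned here is what the MIS path tracer would convert to solid angle before weighting. Using a separate `u_select` keeps the sketch simple. An implementation could instead rescale one sample dimension after the discrete choice.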

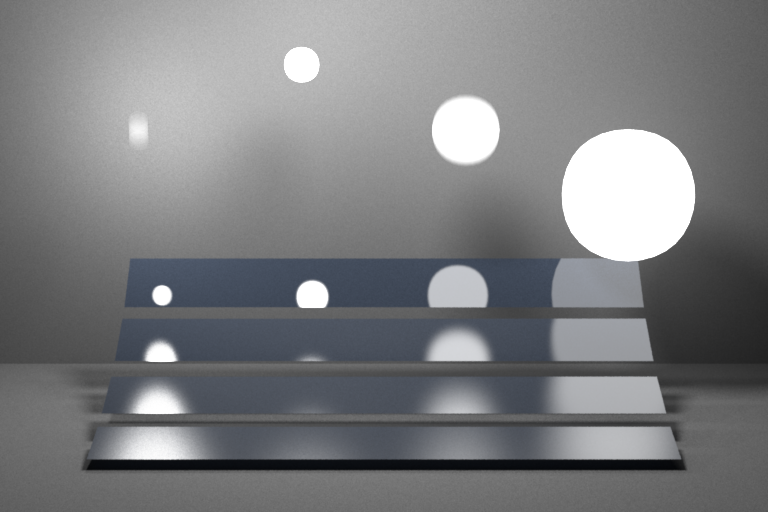

Motion blur & animation — A custom Python pipeline that generates time-parameterized scenes and averages temporal samples within a shutter interval. This required a custom xorshift32 PRNG to decorrelate the temporal noise from Nori’s built-in sampler. The video above shows oscillating light spheres over microfacet plates, rendered with the MIS path tracer.
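The temporal side of such a pipeline might look roughly like the following. This is a minimal Python sketch, not the project's pipeline. The xorshift32 generator uses Marsaglia's standard 13/17/5 shift triple. The per-frame seeding, the stratified shutter times, the sine-based light motion, and every function name here are hypothetical choices for illustration. Rendering each time sample is left to the renderer and is not shown.

```python
import math

MASK32 = 0xFFFFFFFF

class XorShift32:
    """Marsaglia xorshift32; the state must never be zero."""

    def __init__(self, seed):
        self.state = (seed & MASK32) or 1

    def next_u32(self):
        x = self.state
        x ^= (x << 13) & MASK32
        x ^= x >> 17
        x ^= (x << 5) & MASK32
        self.state = x
        return x

    def next_float(self):
        return self.next_u32() / 4294967296.0   # uniform in (0, 1)

def shutter_times(frame, n, t_open, t_close, base_seed=0x1234567):
    """Hypothetical: n stratified times in [t_open, t_close), with jitter drawn
    from a per-frame xorshift32 stream independent of the pixel sampler."""
    rng = XorShift32(base_seed ^ ((frame * 0x9E3779B9) & MASK32))
    width = (t_close - t_open) / n
    return [t_open + (k + rng.next_float()) * width for k in range(n)]

def light_offset(t, amplitude=0.5, frequency=1.0):
    """Hypothetical time-parameterized scene: vertical offset of an oscillating light."""
    return amplitude * math.sin(2.0 * math.pi * frequency * t)

def average_frames(frames):
    """Box-filter the time samples: per-pixel mean of equally sized images."""
    n = len(frames)
    return [sum(px) / n for px in zip(*frames)]
```

Drawing the shutter offsets from a dedicated generator keeps them from sharing a sequence with the per-pixel samples. Otherwise, the temporal jitter could correlate with the spatial noise pattern.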

Code — Due to a strict internal policy against sharing source code, this project lives in a private repository on the faculty GitHub.